Michael Lewis

W. W. Norton & Company, 2016

Amazon

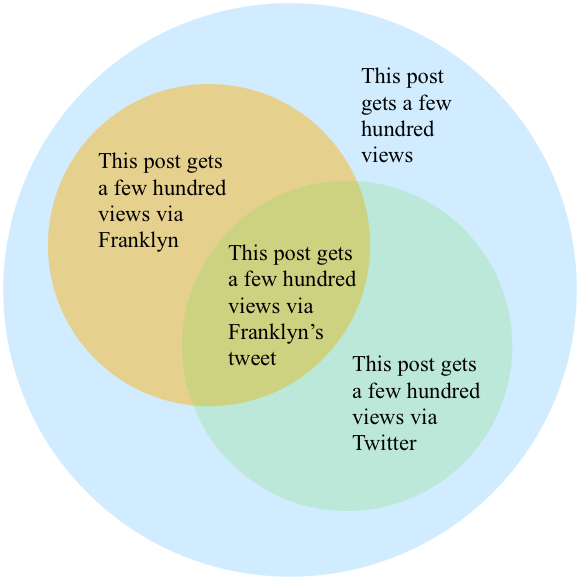

Which is more probable:

- This post will get a few hundred pageviews.

- My friend Franklyn will tweet this post, resulting in a few hundred pageviews.

If you chose the second option I have a book recommendation for you.

Since the 17th century, economists, mathematicians and philosophers have designed normative theories of decision making. That is, theories of how we should make decisions. These are great for optimizing your own decision making. But they don’t necessarily help you to predict the behaviour of others, because there’s no guarantee that others are following the norms. It wasn’t until the 1960s that significant attention was paid to how we actually make decisions, which turns out to be pretty different from the theorems of formal decision theory.

The Undoing Project tells the story of Israeli psychologists Daniel Kahneman and Amos Tversky. The pair crafted subtle experiments to reveal how we make choices. Though wildly different in demeanour, both were fascinated by quirks of the mind. They shared an impish sense of humour, often directed at the mistakes made by their subjects. Over the course of three decades they developed an intense relationship as creative partners and friends. Together they shaped the course of modern cognitive psychology.

Even before their careers, Kahneman and Tversky led remarkable lives. When Kahneman was a child his family moved from town to town in occupied France, carefully concealing their Jewish identity from Nazi authorities. After the war they settled in Israel, where Daniel would become the country’s first military psychologist. Tversky, born and raised in Israel, was likewise a soldier. His career as a psychologist was interrupted by both the second Arab-Israeli War and the Six-Day War, where he served as a paratrooper.

Their collaboration began at Hebrew University in the late 1960s, where both were rising stars in the psychology department. In Thinking, Fast and Slow, Kahneman frames their research program by analogy to language acquisition:

At age four a child effortlessly conforms to the rules of grammar as she speaks, although she has no idea that such rules exist. Do people have a similar intuitive feel for the basic principles of statistics?

It turns out the answer is “no.” The two main sources of error are “heuristics” and “framing.”

In trying to determine if a scenario is probable, we don’t perform explicit computation. Instead, we use heuristics – general rules that allow us to ballpark probabilities without invoking any math. These heuristics work in some cases, but in others they lead to systematic errors.

Framing refers to the way in which choices or situations are presented. Subtle changes in presentation can lead to major changes in behaviour. Lewis explains framing theory as the recognition that “people did not choose between things. They chose between descriptions of things.”

The pair covered a lot of ground and I can’t do it justice here. Lewis lays it out in laudable detail, so if you want the full lowdown you’ll have to buy the book. I also recommend looking into Kahneman’s book, as well as their papers from 1974 and 1979. One example I do want to relay is the representativeness heuristic, which you may have used in the leading question of this post.

The representativeness heuristic works as follows: suppose we’re trying to estimate the probability that some object X belongs to some type T. What we tend to do, argue Kahneman and Tversky, is gauge the degree to which X is representative of T. Prima facie this sounds okay, but there are a number of ways it can lead to error. One is that it results in a tendency to throw away background probabilities. In one experiment, subjects were told that a sample of people contains 70 engineers and 30 lawyers. When asked the probability that a random person drawn from the sample is a lawyer, the subjects correctly answered 30%. But given a generic, uninformative description of a person from the sample (e.g. “Married, no kids, high ability, high motivation, well liked by colleagues”), the subjects said there’s a 50/50 chance this person is a lawyer – completely throwing away the information about the distribution of the sample. The description doesn’t give you any new information, so you should stick to the base rates. But since the description is equally representative of lawyers and engineers, the heuristic leads people to an erroneous result.
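To see why the base rate should survive an uninformative description, here is a minimal sketch of Bayes' rule applied to the 70/30 sample. The function name and the likelihood values (0.2, 0.4) are my own illustrative assumptions, not figures from the experiment; the only numbers taken from the text are the 70 engineers and 30 lawyers.

```python
def posterior_lawyer(prior_lawyer, p_desc_given_lawyer, p_desc_given_engineer):
    """Bayes' rule: P(lawyer | description)."""
    prior_engineer = 1.0 - prior_lawyer
    # Total probability of seeing this description at all.
    evidence = (p_desc_given_lawyer * prior_lawyer
                + p_desc_given_engineer * prior_engineer)
    return p_desc_given_lawyer * prior_lawyer / evidence


base_rate = 30 / 100  # 30 lawyers in a sample of 100

# Uninformative description: equally likely for lawyers and engineers
# (0.2 is an arbitrary assumed value; any equal pair gives the same result).
uninformative = posterior_lawyer(base_rate, 0.2, 0.2)

# Hypothetical informative description: twice as likely for a lawyer.
informative = posterior_lawyer(base_rate, 0.4, 0.2)

print(f"uninformative description: {uninformative:.2f}")
print(f"informative description:   {informative:.2f}")
```

When the two likelihoods are equal they cancel, so the posterior is just the prior of 30%. Only a description that is actually more typical of one group than the other should move the estimate, and even then the base rate still pulls on the answer.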

Returning to the opening question: the first option has to be more probable, because it includes the second option. It’s the second option plus a bunch of other stuff.
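The same point can be checked with a toy calculation. The joint probabilities below are invented purely for illustration (I have no idea how likely Franklyn is to tweet anything); what matters is that the inequality holds for any numbers you plug in, as long as they sum to one.

```python
from fractions import Fraction

# Hypothetical joint probabilities, made up for illustration only.
# Keys: (franklyn_tweets, few_hundred_pageviews)
joint = {
    (True, True): Fraction(5, 100),
    (True, False): Fraction(5, 100),
    (False, True): Fraction(20, 100),
    (False, False): Fraction(70, 100),
}
assert sum(joint.values()) == 1

# Option one: a few hundred pageviews, for whatever reason.
p_option_one = sum(p for (tweets, views), p in joint.items() if views)

# Option two: Franklyn tweets AND there are a few hundred pageviews.
p_option_two = joint[(True, True)]

print(p_option_one, p_option_two)
assert p_option_two <= p_option_one
```

Option one is the sum of option two and the case where the pageviews arrive without a tweet, so it can never come out smaller.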

By filling in details, I make the second option more representative of The Kind of Thing We Expect to Happen. But at the same time, the details make it less likely. Each detail added to a description of an event precludes all the possibilities that don’t include that detail. The exact feature that makes option two less likely in reality makes it seem more likely in our heads.

This is called the conjunction fallacy. To be fair, I should mention that it has attracted some controversy. One critic is Gerd Gigerenzer, whom Lewis mentions briefly. Gigerenzer claims that these experiments exploit “conversational implicature.” In context, the argument goes, what I’ve specified as option one could reasonably be interpreted as “this blog post inexplicably gets a few hundred pageviews” – an event perhaps less likely than option two. But experimenters have implemented various controls, and even in strict settings subjects make the same error.

Experiments like this made Kahneman and Tversky stars in the world of academia. When they left Israel in the 1970s, they were offered jobs at top American schools. Soon their influence would extend beyond the psychology department. Several economists believed these results had ramifications for their own models, which tend to assume that people are good intuitive statisticians and rational decision makers. “Behavioural economics” would become a burgeoning research field. For his work in founding it, Kahneman was awarded the Nobel prize for economics in 2002.

Not everyone shares such a high regard for behavioural economics. Unsurprisingly, the chief critics are traditional economists, many of whom were nonplussed when their Nobel prize went to a psychologist. One explanation for this would simply be defensiveness. Another would be cultural differences between psychologists and economists. Lewis quotes Amy Cuddy’s explanation: “psychologists think economists are immoral and economists think psychologists are stupid.”

But the criticism from economic quarters is not quite so shallow. It largely stems from a sense that it’s still unclear what to do with the results of Kahneman and Tversky’s surveys. They’ve identified a flaw in the theory (and you can find a concrete example in almost every domain – here is a particularly brutal takedown of homo economicus) but they haven’t offered a model that makes more accurate predictions in the general case. You can find a lot of clickbait headlines claiming that behavioural economics would have prevented the 2008 financial crisis, but you probably won’t find a convincing explanation of how it would actually have helped. Scott Sumner provides a critique.

Science is a competitive enterprise. Your theory doesn’t have to be perfect; it just has to be better than the others. Finding counterexamples to the best available theory isn’t enough to supplant it. So until behavioural economics finds positive application in macroeconomics, what can it do for us? I think there are three important areas that elude Sumner’s criticism.

The first is the structure of public policy. In light of Kahneman and Tversky we know that how options are framed affects which option people tend to choose. So naturally we should bring this understanding to bear on how we present choices to people. A common example is opt-in versus opt-out architectures for services. You’ll typically see a strong bias toward the default – and you should be aware of this in the design process. This idea, dubbed “choice architecture”, has been explored in depth by Cass Sunstein. (The Undoing Project was actually inspired by Richard Thaler and Cass Sunstein’s review of Moneyball, which pointed out that many of the errors of human judgement that Lewis discussed had already been studied extensively by psychologists.)

Secondly, people who make products have a lot to learn from Kahneman and Tversky. Opt-in versus opt-out architectures are just as relevant to web applications as they are to social services. Kahneman’s book has become a favourite with the design crowd. A sensitivity to the effects of framing can help you run better conversion experiments and user tests, and better explain their results.

The last is in the mundane choices that make up the bulk of our lives. Whenever we’re solving a problem – no matter how small – we can benefit from the meta-awareness required to recognize whether we’re solving the right problem. Is our target a genuine problem, or just a fiction we’ve invented? If the question were framed differently, would our answer change?

“It was as if he had been assigned to take apart a fiendishly complicated alarm clock to see why it wasn’t working, only to discover that an important part of the clock was inside his own mind.”

Lewis has extraordinary range. He can seamlessly shift topics from Israeli history to Gestalt psychology; from regression analysis to Charles Barkley’s thoughts on “idiots who believe in analytics.” His description of the duo’s experiments is rigorous – I’ve read the 1974 and 1979 papers, and excepting the theory section of the latter, no important detail is sacrificed.

Science textbooks and journal articles tend to be woefully ahistorical. Biographies and popular science books often emphasize history over science. It’s uncommon for a book to do both well, but this one does.