SAT is hard, but there are algorithms that tend to do okay empirically. I recently learned about the Davis-Putnam-Logemann-Loveland (DPLL) procedure and wrote up a short Python implementation.
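For a rough idea of what DPLL does, here is a minimal sketch. It is not the implementation from the post. It assumes clauses are lists of nonzero integers (DIMACS-style, where `-3` means "not x3"), and the helper names `assign` and `dpll` are made up for illustration. It does unit propagation plus naive branching and skips pure-literal elimination.

```python
def assign(clauses, literal):
    """Simplify the clause list under the assumption that `literal` is true."""
    simplified = []
    for clause in clauses:
        if literal in clause:
            continue  # clause is satisfied, drop it
        # the opposite literal is now false, so remove it from the clause
        simplified.append([l for l in clause if l != -literal])
    return simplified


def dpll(clauses, model=None):
    """Return a (possibly partial) satisfying assignment as {var: bool}, or None."""
    model = dict(model or {})
    # Unit propagation: a one-literal clause forces that literal to be true.
    while True:
        if any(len(clause) == 0 for clause in clauses):
            return None  # an empty clause means a conflict
        units = [clause[0] for clause in clauses if len(clause) == 1]
        if not units:
            break
        literal = units[0]
        model[abs(literal)] = literal > 0
        clauses = assign(clauses, literal)
    if not clauses:
        return model  # every clause has been satisfied
    # Branch on the first literal of the first remaining clause.
    literal = clauses[0][0]
    for choice in (literal, -literal):
        result = dpll(assign(clauses, choice), {**model, abs(choice): choice > 0})
        if result is not None:
            return result
    return None
```

Calling `dpll([[1, 2], [-1, 3], [-2, -3]])` returns a dict mapping variable numbers to truth values, and `dpll([[1], [-1]])` returns `None` because the formula is unsatisfiable.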

Tag Archives: python

active learning

Active learning is a subfield of machine learning that probably doesn’t receive as much attention as it should. The fundamental idea behind active learning is that some instances are more informative than others, and if a learner can choose the instances it trains on, it can learn faster than it would on an unbiased random sample.
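One common way to pick "informative" instances is uncertainty sampling: ask for labels on the examples the current model is least sure about. The sketch below is just an illustration of that one strategy, assuming a binary classifier that outputs a positive-class probability for each unlabeled instance. The function name `most_uncertain` is hypothetical.

```python
def most_uncertain(probabilities, k=1):
    """Return the indices of the k instances whose predicted
    positive-class probability is closest to 0.5."""
    ranked = sorted(
        range(len(probabilities)),
        key=lambda i: abs(probabilities[i] - 0.5),  # small distance = high uncertainty
    )
    return ranked[:k]


# Predicted probabilities for four unlabeled instances (made-up numbers).
pool_probabilities = [0.95, 0.52, 0.10, 0.40]
query_indices = most_uncertain(pool_probabilities, k=2)
```

Here the learner would request labels for instances 1 and 3, the two it is least confident about, rather than drawing two instances at random.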

building a term-document matrix in spark

A year-old Stack Overflow question that I’m able to answer? This is like spotting Bigfoot. I’m going to assume access to nothing more than a Spark context. Let’s start by parallelizing some familiar sentences.

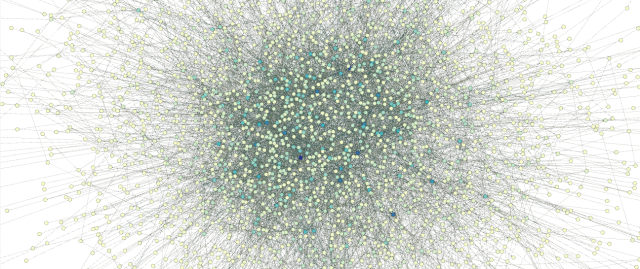

visualizing piero scaruffi’s music database

Since the mid-1980s, Piero Scaruffi has written essays on countless topics, and published them all for free on the internet – which he helped develop. You can learn more about him (and pretty much anything else that might interest you) on his legendary website.

the lowest form of wit: modelling sarcasm on reddit

A while back Kaggle introduced a database containing all the comments that were posted to reddit in May 2015. (The data is 30 GB in SQLite format and you can still download it here.) Kagglers were encouraged to try NLP experiments with the data. One of the more interesting responses was a script that queried and displayed comments containing the /s flag, which indicates sarcasm.